Let’s outline the purpose of A/B testing and how it works.

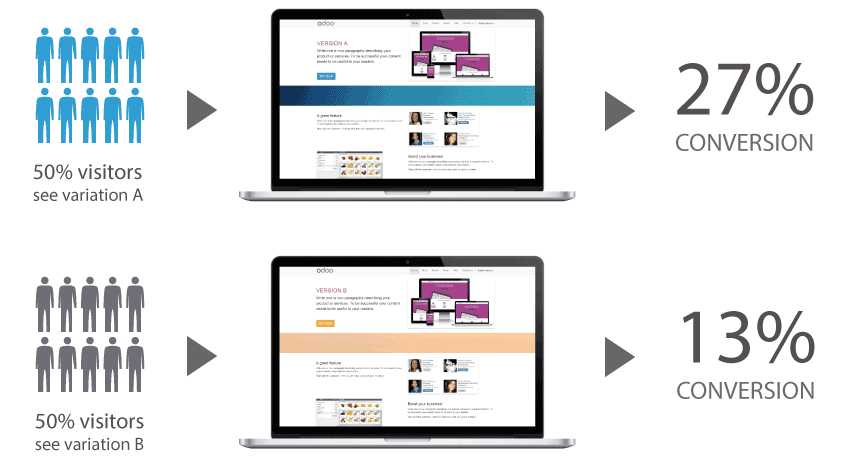

A/B testing, also known as split testing, is a technique for comparing a proposed change to a web page against the current design and determining which version produces better results.

At the most basic level, users are divided into two groups: one group sees your existing version and the other sees your new design.
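One common way to make this split is to hash a visitor ID so that the same person always lands in the same group. The following is a minimal Python sketch of that idea, not a description of any particular testing tool. The function name `assign_variant`, the experiment label `homepage-test`, and the 50/50 cutoff are all assumptions made for illustration.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "homepage-test") -> str:
    """Return 'control' or 'variant' for a visitor, stable across visits."""
    # Hash the experiment name together with the visitor ID so the same
    # visitor can fall into different groups in different experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    # Buckets 0-49 see the existing page, 50-99 see the new design.
    return "control" if bucket < 50 else "variant"
```

Because the assignment depends only on the ID and the experiment name, a returning visitor keeps seeing the same version without any extra storage.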

The two variations are evaluated against one another to see which one gets the most conversions on your website.

This allows you to experiment with fresh design concepts and compare their performance to your existing web pages.

Note:

We’ve carried out A/B test development for a number of our clients at Brillmark. While the experiments have yielded some notable outcomes, there are also drawbacks that are rarely mentioned. This post discusses the advantages and disadvantages of A/B testing, and how and when it should be conducted.

Why do A/B tests?

Nowadays, it is relatively simple to set up and run tests. Once configured, they can be left running with little maintenance, and most testing tools will notify you when there is a winning variant.

To begin, let’s examine some of the reasons for A/B testing.

These tests produce quantifiable results, which means they are less susceptible to interpretation and provide more definitive answers than other forms of testing.

This means that if the test is conducted correctly, the winning variant is likely to outperform the original once it is rolled out.
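To make "quantifiable" concrete, here is a rough Python sketch of one standard way to compare two conversion rates, a two-proportion z-test. It is an illustration under simple assumptions (independent visitors, one conversion per visitor at most), and the function name and the sample figures in the usage note are hypothetical, not results from a real test.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value
```

For example, `two_proportion_z_test(200, 5000, 260, 5000)` compares a made-up 4.0% control rate with a made-up 5.2% variant rate. A small p-value suggests the difference is unlikely to be chance alone.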

Finally, tiny modifications can have a significant impact. Numerous examples exist of how little changes, from text to color on webpages, resulted in substantial gains.

Case studies are a good way to understand how small tweaks to your website can considerably influence its performance.

Here are the best reasons to do A/B testing:

User Engagement Boost

While working with clients, we often see that they have plenty of traffic on their website but a low conversion rate, which means much of that traffic is wasted.

There can be many hypotheses as to why the conversion is this low.

Every day, a new visitor to your website sees your user interface for the first time. They arrive with a purpose.

That purpose might be to learn about your service or product, purchase a product, read blogs, or simply explore the website. Whatever the goal, several common elements can act as pain points.

Pain points might be a page’s subject line, headline, graphics, call-to-action button, language, layout, or even typefaces.

These features may appear insignificant, but when they get in the way of a visitor’s goal, they lead to a negative user experience.

So at Brillmark, we develop A/B tests for these small factors to show what influences user behavior. We then improve the experience by addressing visitors’ concerns, which boosts user engagement and conversions.

Low Bounce Rates

When evaluating a client’s website, we check the bounce rate, as it is a crucial factor in assessing the website’s performance.

Possible causes of a high bounce rate include too many options, misaligned expectations, difficult navigation, and excessive use of technical jargon.

A/B testing helps determine which features on your website will improve the user experience, reduce bounce rate, and increase conversion.

Enhanced ROI

After 10 years of A/B testing, we can say, and most optimizers will agree, that bringing high-quality visitors to your website is expensive.

A/B testing is one of the best ways to find out what converts more of those visitors into leads. Using A/B testing data, you can get more value from current and new visitors by making small adjustments that improve conversions and ROI.

Statistic-Based Improvements

At Brillmark, we make sure that the hypotheses formulated for a test are data-driven, so that you can identify the ‘winner’ and the ‘loser’ of the test without relying on guesswork, gut feelings, or instincts.

Metrics such as time spent on the page and demo requests then show which version won and which lost.

Redesign the Website Profitably and at Low Risk

When changing a website, developers usually want to avoid rebuilding the entire page at once; A/B testing allows for incremental modifications.

A full redesign can put your current conversion rate at risk. A/B testing helps you focus your resources on the few changes that deliver the most ROI.

Updates might range from modest text or color changes on a CTA button to a larger overhaul of the website. The choice between the two versions should always be based on data from A/B testing.

You can learn whether a new feature will affect visitors’ behavior or the purchase funnel by deploying it as an A/B test on a copy of the page.

This way, you can improve your website profitably without committing to substantial modifications up front.

However, there are drawbacks to depending on A/B testing to determine your site’s design.

What are some of the disadvantages of A/B Testing?

Duration:

One of the primary criticisms of A/B testing is the time required to see results.

If you’re Amazon and your site has millions of monthly visits, this is not a problem. However, if you operate a smaller site or want to test a page that receives less traffic, finding a winning variant can take much longer.

This becomes much more problematic if the modifications you’ve made are relatively minimal.

While modest modifications might have a significant impact, they frequently have a trivial effect on your outcomes. Conducting tests with tiny adjustments can often be inconclusive, regardless of the duration of the test.
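The link between traffic, effect size, and duration can be estimated before a test starts. Below is a rough Python sketch using a common textbook approximation for the number of visitors needed per variant. The function name, the default 5% significance level, and the 80% power are assumptions chosen for illustration; your testing tool may use a different method.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed in EACH group to detect the given lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    # Critical values for a two-sided test and the desired power.
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)
```

Comparing `visitors_per_variant(0.03, 0.05)` with `visitors_per_variant(0.03, 0.20)` shows why small tweaks are hard to test: at the same baseline rate, detecting a 5% relative lift needs many times more visitors than detecting a 20% lift.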

Cloaking:

Another area to keep an eye out for while conducting your testing is cloaking.

Cloaking is a form of black hat SEO, in which the information displayed to a search engine crawler differs from the one shown to a user.

For example, a site might include keywords in the content only when a search engine crawler views the page, not when a human visitor does.

A/B testing, by displaying multiple versions of a page to different users, runs the danger of being seen as cloaking by search engines.

This should not be an issue as long as you adhere to Google’s stated guidelines, but it is something to consider if you want to conduct testing.

Conversion First:

Sometimes we come across clients who, after seeing some gains from quantitative testing, start pushing for more and more conversions.

That can dehumanize your consumers, reducing them to lab rats in your trials.

A disadvantage of depending exclusively on quantitative testing is that it frequently results in a ‘conversion first’ mindset.

While a conversion-driven mindset makes commercial sense, it should not come at the price of the user experience as a whole.

Concentrating only on conversions might result in short-term ‘solutions’ that do more harm than good in the long run.

Dark Pattern:

There are serious ethical issues with focusing solely on converting your customers.

There is a distinction between performing conversion rate optimization and incorporating Dark Patterns (user interfaces meant to deceive users into taking actions they did not intend to take) into your design.

Conversion optimization should be a win-win scenario for both customers and website owners, with improved purchase experiences benefiting both parties.

Tricks and tactics used to extract additional money from users against their will may increase online sales.

Still, the cost of extra customer service to handle complaints and the negative reputation that dark patterns bring to your brand will almost always outweigh the benefits.

Although there is no long-term benefit to employing Dark Patterns in your design, there is a temptation to use some of these dubious approaches during A/B testing.

They are likely to increase conversions in the near term and in isolation, but usually at a cost.

Obsessed with A/B Testing:

It’s easy to become fascinated with A/B testing and run an endless number of tests to find solutions to all of your design issues.

Still, the problem often extends beyond what can be evaluated on a single webpage.

For example, a hypothesis about low CTA clicks often blames the CTA’s design, color, placement, or content.

In reality, people may not be clicking the CTA because of larger problems: they may not like the product, may not understand what they will get, may find it overpriced, may prefer a competitor’s sale, or may be put off by a slow website, and the list goes on.

In general, relying exclusively on A/B tests might result in an obsession with minute design tweaks and, as a result, a loss of sight of the overall picture.

Does A/B Testing Hurt SEO?

There is widespread confusion about whether running an A/B test will affect a website’s search ranking.

Google has clarified in their article “Website Testing And Google Search” that A/B testing will not affect a website’s search engine ranking.

However, if A/B testing is utilized improperly or overused, it can jeopardize your website’s search engine results.

Google has released guidelines for conducting successful testing with minimal influence on a website’s search rank and performance.

No Cloaking

Cloaking is the practice of displaying different content to visitors than to Googlebot. It violates the Webmaster guidelines whether or not you are running a test.

Violating the guidelines can get a site demoted or removed from search results, at which point the test’s results would be irrelevant. It is better not to take the chance.

Conduct Experiments for an Appropriate Length of Time

Running a test for longer than necessary, especially if you serve one variation of your content to a large share of your users, might be interpreted as an attempt to deceive search engines.

A reliable testing tool will indicate when sufficient data has been collected to draw a sound conclusion.

Google recommends updating your site and removing all test variations as soon as a test concludes, as well as avoiding tests that run for unnecessarily long periods.

Use rel="canonical"

When running tests with multiple URLs, Google suggests using the rel="canonical" link attribute on all alternative URLs to point every variant back to the original, or control, version of the page.

Using rel="canonical" is recommended over the noindex meta tag because it more clearly signals your intent while testing.

As a result, search engines will recognize that all test URLs are near duplicates of the first URL and should be merged, with the first URL as canonical.

This should prevent the Googlebot from becoming confused by several page variations.

For instance, if you are testing a variation of your homepage, you don’t want search engines to stop indexing your homepage; you just want them to recognize that all the test URLs are near copies or variants of the original URL and should be grouped as such, with the original URL serving as the canonical.

Using noindex instead of rel="canonical" in such a circumstance might occasionally have unintended consequences; for example, if for some reason Google chooses one of the variant URLs as the canonical, the “original” URL might also get dropped from the index, since it would be treated as a duplicate.
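As a small illustration of the markup involved, here is a Python sketch that builds the canonical tag a variant page would carry in its head section. It assumes your variant pages are rendered by server-side code you control; the helper name `canonical_link_tag` and the example.com URL are hypothetical.

```python
from html import escape


def canonical_link_tag(original_url: str) -> str:
    """Build the <link> tag that points a test variant at the original page."""
    # Escape the URL so characters such as & or " cannot break the attribute.
    return f'<link rel="canonical" href="{escape(original_url, quote=True)}">'


# Every variant URL of the homepage test would include this same tag.
print(canonical_link_tag("https://www.example.com/"))
# prints: <link rel="canonical" href="https://www.example.com/">
```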

Use 302 Redirects, Not 301

A 302 redirect is temporary, but a 301 redirect is permanent. Therefore, when doing an A/B test that redirects users from the original URL to a variant URL, use a 302 redirect instead of a 301 redirect.

This tells search engines such as Google that the redirect is temporary, lasting only as long as the test, and that they should keep the original URL in their index rather than replacing it with the test URL.

JavaScript-based redirects are also acceptable.
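In server-side code, the difference comes down to which status code is sent with the redirect. Here is a minimal Python sketch, assuming a hypothetical helper `redirect_for_group` that your request handler would call once a visitor’s group is known. The group labels and the example URL are illustrative only.

```python
from http import HTTPStatus
from typing import Optional, Tuple


def redirect_for_group(group: str, variant_url: str) -> Optional[Tuple[int, dict]]:
    """Return (status, headers) for variant visitors, or None for control."""
    if group != "variant":
        return None  # control visitors stay on the original URL
    # 302 Found marks the redirect as temporary; 301 would mark it permanent.
    return HTTPStatus.FOUND.value, {"Location": variant_url}
```

For example, `redirect_for_group("variant", "https://www.example.com/home-b")` returns the 302 status with a `Location` header, while a control visitor gets `None` and is served the original page.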

How can Brillmark help?

We are conversion rate optimization (CRO) professionals with years of expertise developing A/B, multivariate, and personalization studies across the customer lifecycle.

With over 10,000 campaigns under our belt, we’ve got you covered regardless of the device, application, platform, or complexity.

While you develop your strategy, we supply the technical expertise and consistently deliver well-built campaigns.

Here are some tips to help your A/B tests generate results:

While A/B testing may be a highly effective method of increasing conversions on your website, it should be used in conjunction with a larger user testing strategy. This should entail researching, analyzing data, and conducting user testing sessions.

You can also use these other research methods to decide where to run your A/B tests. Each A/B test should begin with a hypothesis based on a thorough understanding of your website’s current state.

You may discover that customers aren’t scrolling on a page where you require them to or that the exit rate on one page in your primary conversion route is exceptionally high.

Before doing any A/B testing on your site, you should determine the source of any issues rather than attempting to ‘correct’ problems that do not exist.

When doing A/B testing, do not expect every result to be definitive; minor adjustments often produce only small differences.

Additionally, allow ample time for a test to reach a conclusion, especially if your website receives little traffic.

Avoid skewed test results in every manner possible, and always ‘test your tests’ to ensure they have been configured appropriately before running them.

You should also treat any failed test as a learning opportunity.

If a concept does not work, knowing what to avoid will improve the success of future tests.

With the help of Brillmark, you should aim for a 50/50 traffic split between your variations across all browsers and devices.
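One way to ‘test your test’ on this point is to check whether the observed visitor counts are consistent with a 50/50 split. The Python sketch below uses a chi-square goodness-of-fit check with one degree of freedom. The function name and the 0.001 alert threshold are assumptions for illustration, and the same check can be repeated per browser or device.

```python
from math import erfc, sqrt


def split_looks_unbalanced(n_control: int, n_variant: int,
                           threshold: float = 0.001):
    """Return (p_value, flagged) for an intended 50/50 traffic split."""
    expected = (n_control + n_variant) / 2
    # Chi-square statistic comparing observed counts with an even split.
    chi2 = ((n_control - expected) ** 2 + (n_variant - expected) ** 2) / expected
    # Survival function of the chi-square distribution with 1 degree of freedom.
    p_value = erfc(sqrt(chi2 / 2))
    return p_value, p_value < threshold
```

A split such as 5,000 versus 5,050 visitors is well within normal variation, while 5,000 versus 5,500 gets flagged and is worth investigating before you trust the conversion numbers.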

Reach out to us and let us assist you with the flawless implementation of A/B testing.